If late-night LeetCode sessions and constant LinkedIn job scrolling are part of the routine, the gap between “preparing” and “actually converting to offers” remains very real. Leeco AI positions itself directly in that gap: part in‑browser coding mentor, part AI‑driven job search autopilot, and entirely focused on a developer’s journey from DSA basics to offer letter. Instead of being just another chatbot, it sits inside active tabs, observes what’s happening, and quietly nudges toward more effective prep and smarter applications.

Leeco AI in one sentence (and why it matters)

Leeco AI functions as a specialised co‑pilot that understands three domains deeply: coding interview patterns, the way developers study online, and how modern tech hiring funnels operate. Rather than juggling a generic chatbot, scattered bookmarks, and chaotic job boards, everything is brought into a single environment that helps reason through a LeetCode medium and, in the same ecosystem, tailor and send resumes to relevant roles.

To see whether this is smart packaging or genuine utility, it helps to separate Leeco into two personas: the learning companion and the job-search autopilot.

Two faces of Leeco AI

| Pillar | What it does |
| --- | --- |
| Learning Companion | Sits beside LeetCode/YouTube/blogs, gives hints, reviews code, runs mock interviews |
| Job-Search Autopilot | Scans jobs, tailors resumes, assists referrals, tracks applications |

Design philosophy is straightforward: show up where developers already spend time, then layer intelligence on top of natural behaviour. During “prep mode”, it behaves like a contextual tutor. During “job hunt mode”, it shifts into an AI job agent trying to maximise relevant applications without demanding constant manual effort.

How Leeco works as a learning companion

After installing the Chrome extension and opening a LeetCode problem, the difference from a typical ChatGPT workflow is immediate. Instead of copying the entire problem into a separate window and re‑explaining context, a slim sidebar appears in the same tab. It recognises the current problem, reads the description, and can access the current attempt (if access is granted).

Interaction usually begins with gentle nudges: a request for a high-level hint rather than a full solution. Responses speak in the language of patterns and trade‑offs, perhaps suggesting a sliding window instead of nested loops or pointing out that a hash map can break an O(n²) barrier. When a block persists, the assistant can step through code line by line, check edge cases, or explain why a particular test case keeps failing.
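To make the "hash map breaks the O(n²) barrier" hint concrete, here is a minimal sketch using the classic two-sum problem. The problem choice and function names are illustrative assumptions, not output from Leeco itself.

```python
from __future__ import annotations

from typing import Optional


def two_sum_brute_force(nums: list[int], target: int) -> Optional[tuple[int, int]]:
    """Check every pair of indices: O(n^2) time, O(1) extra space."""
    for i in range(len(nums)):
        for j in range(i + 1, len(nums)):
            if nums[i] + nums[j] == target:
                return i, j
    return None


def two_sum_hash_map(nums: list[int], target: int) -> Optional[tuple[int, int]]:
    """Single pass with a value -> index map: O(n) time, O(n) extra space."""
    seen: dict[int, int] = {}
    for j, value in enumerate(nums):
        complement = target - value
        if complement in seen:
            # The complement appeared earlier, so the pair is complete.
            return seen[complement], j
        seen[value] = j
    return None
```

Both functions return the same index pair on inputs such as `[2, 7, 11, 15]` with target `9`; the second trades extra memory for a single pass, which is the kind of trade‑off a pattern-level hint points toward.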

The same experience extends to YouTube and technical blogs. During a dynamic programming video, for example, an instructor might rush through “store subproblems in a table”. With Leeco active, that segment can be paused, highlighted, and re‑explained with a simpler example or linked to a familiar pattern. Learning remains in a single tab, but a second teacher is effectively present in the margins.
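As an example of what "store subproblems in a table" can look like once slowed down, here is a small sketch on the familiar climbing-stairs problem. The problem is chosen here for illustration and is not taken from any specific video or from Leeco.

```python
def climb_stairs(n: int) -> int:
    """Count the ways to climb n steps when each move covers 1 or 2 steps."""
    if n < 0:
        raise ValueError("n must be non-negative")
    # ways[i] stores the answer to the subproblem "ways to reach step i".
    ways = [0] * (n + 1)
    ways[0] = 1  # one way to stay at the bottom: take no steps
    for i in range(1, n + 1):
        ways[i] = ways[i - 1]  # last move was a single step
        if i >= 2:
            ways[i] += ways[i - 2]  # last move was a double step
    return ways[n]
```

Each table entry is computed once from two earlier entries, so the work grows linearly with `n` instead of exponentially as it would with naive recursion.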

Over time, that context‑aware behaviour becomes more significant than any individual feature. Interaction feels less like chatting with a detached model and more like working alongside a tutor embedded in the workspace, one that remembers prior sessions and recurring stumbling points.

Roadmaps, repetition and personalised nudges

Serious interview prep is driven by repetition: patterns revisited, weaknesses addressed, and topics cycled until mastery emerges. Leeco quietly tracks attempted problems, frequent areas of confusion, and categories that tend to be avoided. Based on this footprint, it can suggest which problem types deserve priority next or which topics merit another focused cycle.

Instead of following an inflexible, generic DSA sheet, the prep flow bends slightly around actual behaviour. A learner cruising through arrays and strings might be nudged into graphs earlier than expected. Another who consistently struggles with dynamic programming will see that topic resurfacing more frequently in recommendations. This doesn’t eliminate the need for deliberate planning, but it offloads some of the meta‑decision work that usually sits in the back of the mind.
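How Leeco ranks topics internally is not documented here, so the following is only a hypothetical sketch of the general idea: record attempts per topic and surface the topics with the lowest rate of unaided solves first. The `Attempt` type, its fields, and the scoring rule are all assumptions made for illustration.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Attempt:
    """One practice attempt (hypothetical record, not Leeco's data model)."""
    topic: str
    solved: bool
    used_hint: bool


def rank_topics_by_weakness(attempts: list[Attempt]) -> list[tuple[str, float]]:
    """Return (topic, independent_solve_rate) pairs, weakest topic first."""
    totals: dict[str, int] = defaultdict(int)
    independent: dict[str, int] = defaultdict(int)
    for attempt in attempts:
        totals[attempt.topic] += 1
        # Only count solves that did not rely on a hint.
        if attempt.solved and not attempt.used_hint:
            independent[attempt.topic] += 1
    rates = [(topic, independent[topic] / totals[topic]) for topic in totals]
    # Lowest rate first; ties are broken alphabetically for stable output.
    return sorted(rates, key=lambda pair: (pair[1], pair[0]))
```

Counting only hint-free solves is a deliberate choice in this sketch: it keeps heavily guided topics near the top of the list, so hint-assisted progress is not mistaken for mastery.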

There is a trade‑off. Reliance on hints too early and too often can create an illusion of progress, where guided problem solving looks strong while independent struggle remains weak. Leeco provides an intelligent set of crutches; choosing when to stand without them remains a human decision.

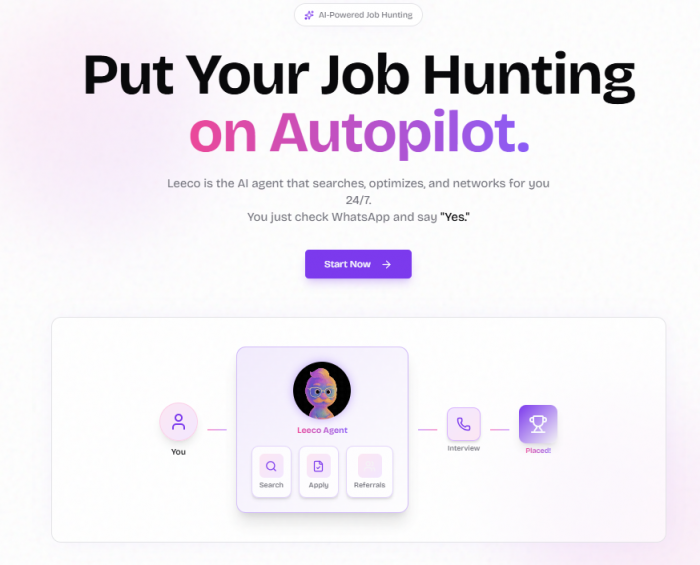

From prep to pipeline: the job-search autopilot

Most tools end their responsibility at “help pass interviews.” Leeco’s second persona is more ambitious, aiming to act as an AI agent that keeps a job hunt alive while study or work continues.

At a high level, the system collects target roles, locations, and some profile context, then uses a base resume as input. From there, it continuously scans popular job portals and company career pages, searching for roles that align with those parameters and filtering out obvious mismatches. Rather than manually combing through feeds every night, a curated stream of roles arrives through a dashboard and often via WhatsApp.

The key innovation is not only in surfacing opportunities but in attempting to adapt the candidate profile to each opening.

Resume tailoring and “intelligent apply”

In 2026, a single static resume sent everywhere rarely cuts it. Leeco’s intelligent apply feature is built around that reality. For each target job, the system analyses the job description, compares it to the base CV, and then proposes a tailored version that foregrounds the most relevant skills, keywords, and experiences.
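The comparison step can be pictured with a deliberately simple sketch: find which skills from a job description already appear in the base resume and which are missing. This is an illustrative assumption about the general technique, not Leeco's actual method; the `vocabulary` set and the function names are hypothetical.

```python
from __future__ import annotations

import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into word-like tokens (keeps + and #)."""
    return set(re.findall(r"[a-z0-9+#]+", text.lower()))


def keyword_gap(job_description: str, resume: str, vocabulary: set[str]) -> dict[str, list[str]]:
    """Report which vocabulary skills the job asks for and the resume has or lacks."""
    wanted = tokenize(job_description) & vocabulary
    have = tokenize(resume) & vocabulary
    return {
        "matched": sorted(wanted & have),
        "missing": sorted(wanted - have),
    }


# Example with made-up text and a hand-picked skill vocabulary.
skills = {"python", "sql", "kubernetes", "react", "c++"}
gap = keyword_gap(
    "We need Python, SQL and Kubernetes experience.",
    "Built Python services backed by SQL databases.",
    skills,
)
```

A "missing" entry is only a prompt for review: it should reach the tailored resume only if the experience genuinely exists.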

Practically, this shifts effort away from repetitive micro‑editing and toward higher-level judgement. Instead of rewriting bullet points from scratch every time, the primary task becomes evaluating whether the AI‑generated version accurately represents experience and strengths. When the edits make sense, the resume can be accepted with minimal adjustments. When something feels misaligned, manual corrections step in before submission.

Leeco claims to learn from patterns in successful resumes for similar roles. If that holds, the system is not simply keyword‑stuffing but also adjusting tone and emphasis to look more compelling to automated filters and hiring teams. That said, any black‑box optimisation deserves careful oversight; productivity gains do not remove the need for personal scrutiny.

Referrals, outreach, and the networking edge

The networking side of job search, especially cold outreach for referrals, is often the most draining. Leeco attempts to reduce this friction by helping identify employees at target companies and drafting outreach messages tuned to both company context and candidate background.

The real improvement lies in escaping the “blank page” problem. Instead of starting every referral request from zero, candidates receive a considered draft that can be refined and personalised. When used with judgement, this can significantly expand reach without dropping into spammy behaviour.

That ethical line is very real. Allowing any system to blast generic messages to dozens of employees risks rapid brand damage. Leeco provides leverage; responsible professionals draw the line on how aggressively that leverage is applied.

Manual vs assisted: a clearer side‑by‑side view

A side‑by‑side comparison makes the job-search proposition easier to visualise.

| Journey step | Traditional approach | With Leeco AI involved |
| --- | --- | --- |
| Discovering roles | Manually search multiple portals | Continuous scanning and curated suggestions |
| Tailoring resume | Edit for each job from scratch | AI‑adapted resumes for review |
| Applying | Refill similar forms repeatedly | Streamlined, semi‑automated application flow |
| Securing referrals | Ad‑hoc LinkedIn DMs | AI‑drafted, targeted outreach to refine |
| Tracking progress | Spreadsheets and memory | Single dashboard of statuses and touchpoints |

What remains unchanged is the need for genuine skills, coherent narrative, and sensible targeting. What changes is the amount of friction standing between an interested candidate and a well‑crafted application.

Day‑to‑day reality: impact in practice

On the learning side, impact shows up in behavioural patterns more than in flashy one‑off moments. Consistent access to context‑aware hints makes it easier to tackle “just one more problem.” Embedded code review and stepwise explanations mean fewer detours into unstructured web searches. Over weeks, those marginal gains accumulate into deeper familiarity with patterns and a larger solved set.

For job search, outcomes are inherently probabilistic. Better role discovery, faster resume customisation, and a smoother path to referrals collectively increase the number of high‑quality applications entering the funnel. That does not equate to guaranteed offers (no tool can supply those), but it does expand opportunity surface area while reclaiming time from administrative grind.

The key is to treat Leeco as something measurable. Tracking metrics like problems solved per month, interview invitations during active autopilot periods, or hours recovered from manual job-board trawling gives a grounded view of its contribution rather than relying on vague impressions.

UX, friction points, and practical limits

Usability is where Leeco leans heavily into proximity. The extension installs quickly, the sidebar blends into LeetCode and similar sites without feeling intrusive, and the interaction model (ask, then get a context‑aware reply) feels intuitive after a single session.

Not everything is frictionless. Chrome‑only support can be a blocker in organisations with locked-down setups or for developers committed to alternative browsers. On resource‑constrained machines or especially heavy pages, initial sidebar load may introduce a small delay. And, as with any AI layer, occasional off‑target answers or oversimplified explanations are part of the territory.

Subscriptions add another practical layer. A free tier allows experimentation, but sustained, serious use typically pushes users toward paid plans. Standard SaaS discipline still applies: understand feature boundaries for each tier, monitor renewals, and ensure that the subscription cost tracks reasonably against realised value, not just perceived convenience.

Value, pricing, and phase‑dependent payoff

The question “Is it worth it?” depends heavily on career stage and current goals.

For final‑year students wrestling with DSA and lacking a clear plan, the learning companion alone can justify a modest monthly cost during intense prep windows. Early‑career engineers undergoing a focused job switch may find that resume tailoring and continuous job scanning pay for themselves in regained time and additional interviews. Highly senior professionals with strong networks and bespoke search strategies might view the autopilot as optional: useful, but not central.

A practical decision rule is simple: estimate whether the tool is likely to result in at least one extra serious interview or five to ten hours of reclaimed time per month. If the answer is yes and pricing remains reasonable, the equation makes sense during “grind” phases. Once that phase passes, downgrading or pausing becomes a rational step.
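The rule above can be written down as a small check. This is a sketch under stated assumptions: the function name, the `monthly_budget` stand-in for "reasonable pricing", and the default of five hours (the lower end of the five-to-ten range) are all illustrative choices, and the numbers in the example are made up.

```python
def worth_subscribing(
    extra_interviews_per_month: float,
    hours_reclaimed_per_month: float,
    monthly_price: float,
    monthly_budget: float,
    min_hours: float = 5.0,
) -> bool:
    """Apply the rule: enough value (interviews OR hours) AND a reasonable price."""
    meets_value_bar = (
        extra_interviews_per_month >= 1
        or hours_reclaimed_per_month >= min_hours
    )
    # "Reasonable" is approximated as fitting within a self-set budget.
    price_is_reasonable = monthly_price <= monthly_budget
    return meets_value_bar and price_is_reasonable


# Illustrative numbers only: no extra interviews, but six hours saved.
decision = worth_subscribing(0, 6, monthly_price=10, monthly_budget=20)
```

Re-running the check with fresh estimates each month gives a simple trigger for downgrading or pausing once the grind phase ends.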

Privacy, control, and ethical boundaries

Any platform handling both code and career data demands careful thought. Leeco’s learning features interface with problem statements, source code, and snippets from educational content, while the job-search module processes resumes, job history, and sometimes outbound messages.

Prudent adoption follows a staged approach. Start with low‑risk data: generic practice problems, non‑sensitive code, and safe learning scenarios. As behaviour becomes better understood, then expand into more sensitive areas like resumes and outreach. Throughout, manual review of AI‑generated resumes and messages remains critical.

Ethically, referral automation is the sharpest edge. Responsibly used, it simply helps articulate thoughtful, targeted requests at scale. Abused, it turns into high‑volume, low‑quality outreach that irritates the very people whose help is being sought. Tooling can propose; professional judgment must dispose.

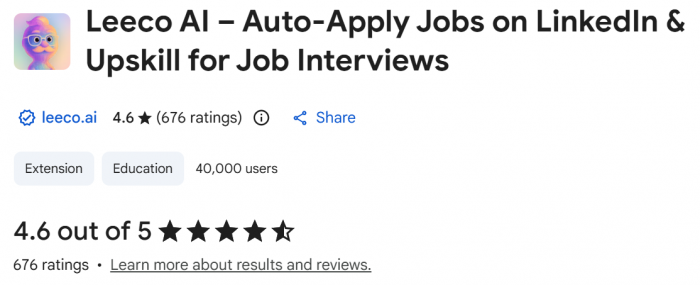

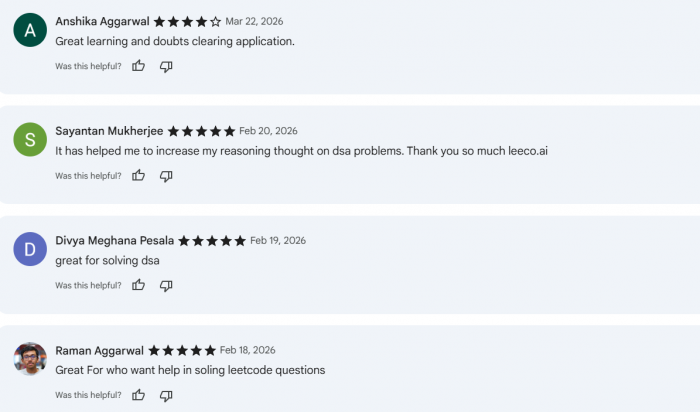

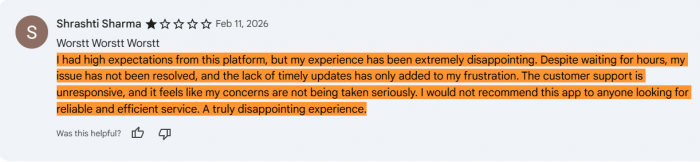

Real user feedback: Reddit and Chrome Web Store

Public sentiment around Leeco AI is largely shaped by two places developers trust: Reddit threads and the Chrome Web Store.

On the Chrome Web Store (and tracking sites like Chrome‑Stats), Leeco AI shows a user base of 20,000+ with an average rating of roughly 4.6 to 4.8★, backed by 400+ ratings.

Reviews consistently highlight that the extension makes LeetCode and DSA practice easier to navigate, praising structured hints, code review, and the way it focuses on approach instead of pasting full solutions. Learners also appreciate quiz‑style interactions after videos and describe the UI as beginner‑friendly, though a few mention occasional lag when many Chrome tabs are open and would like more frequent model updates.

Reddit discussions add a bit more nuance. In prep and AI‑tooling threads, Leeco is generally described as “worth using if interview prep is serious”, especially because it behaves like a mentor embedded in the browser rather than a detached chatbot. At the same time, some comments raise familiar caveats: dependence on Chrome, the need to watch subscription management closely, and the reminder not to lean so hard on AI hints that independent problem‑solving suffers.

Overall, the tone on both platforms aligns: a high‑rated, widely used tool that genuinely improves day‑to‑day prep, with the usual warnings around performance quirks and responsible usage.

Who gains the most and who might not

Leeco AI aligns particularly well with three overlapping groups:

● Students and fresh graduates focused on product-based company interviews.

● Early‑career developers planning a structured job switch with limited time for admin work.

● Regular LeetCode or competitive programming practitioners seeking a coach‑like assistant rather than a pure answer generator.

For developers with already-refined, manual systems (curated problem lists, stable referral networks, and carefully controlled job targeting), the incremental benefit may be smaller. Those wary of browser extensions, remote AI processing, or any outsourcing of application steps will likely prefer a more traditional stack of tools.

The bottom line: a serious co‑pilot, not a shortcut

Leeco AI’s real distinction lies less in any single capability and more in the continuity it offers across the preparation-to-application spectrum. It aims to sit alongside daily study sessions, guide reasoning through problems, and then quietly keep the job funnel active in the background.

Handled well, this reduces the distance between intention and execution: fewer logistical obstacles, more focus on deep work and real conversations. Handled poorly, it risks becoming a comfort blanket, something to subscribe to in the hope that tools can replace the uncomfortable parts of growth.

The healthiest framing is simple: treat Leeco as a specialised co‑pilot. Let it handle context, suggestions, and repetitive motions, while strategic decisions, ethics, and sustained effort stay firmly in human hands.
