The rapid proliferation of Large Language Models (LLMs) has fundamentally altered the economics of information. We have moved from an era of information scarcity to one of abundance, where the marginal cost of generating text has dropped close to zero. However, this technological leap has introduced a subtle but profound crisis in digital publishing: Linguistic Homogenization.

As generative tools become the primary architects of digital drafts, the unique rhetorical "fingerprints" that define human expertise are being smoothed over by statistical probability. For digital publishers and institutional archives, the challenge in 2026 is no longer just production volume—it is the preservation of authority and authentic human resonance.

The "Probability Trap" and the Erosion of Trust

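To understand why machine-generated text often feels "hollow" to a human reader, one must look at the underlying mechanics of predictive text. LLMs generate text by estimating a probability distribution over the next token and sampling from it, a process that naturally favors high-probability phrasing, the "average" of human thought. In the vocabulary of AI-text detection, the result is low Perplexity (how surprising the text is to a language model) and low Burstiness (how much sentence length and structure vary across a passage). Human writing tends to score higher on both, thanks to the erratic sentence rhythms and unexpected metaphors that characterize lived experience.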
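One rough way to make burstiness concrete is to treat it as the spread of sentence lengths relative to their mean. The sketch below is only an illustration under that assumption: the `burstiness` function, the punctuation-based sentence splitter, and the sample strings are all hypothetical, and perplexity is left out because it requires a language model to score the text.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., !, ? (a crude heuristic) and count the words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Assumed proxy: standard deviation of sentence length divided by the mean.

    0.0 means every sentence has the same number of words.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical samples: one with a uniform rhythm, one with a varied rhythm.
uniform = "The tool checks the text. The model writes the draft. The editor reads the copy."
varied = (
    "Stop. The editor read the whole draft twice before she decided "
    "that it needed a different opening. Then she cut it."
)

print(burstiness(uniform), burstiness(varied))
```

The uniform sample scores zero because all three sentences are five words long, while the varied sample scores well above it. Real detectors use more elaborate signals, so this number is best read as a quick rhythm check, not a verdict.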

When digital ecosystems are saturated with this "predictive monotony," audiences begin to experience what might be called Semantic Fatigue. According to research discussed by organizations such as the Poynter Institute, readers increasingly treat clinical, overly polished prose as less trustworthy, often without consciously registering why. In a world of automated noise, the presence of a "human signature" has become a premium asset for brand authority and long-term discoverability.

Forensic Quality Control: The Diagnostic Utility of the AI Checker

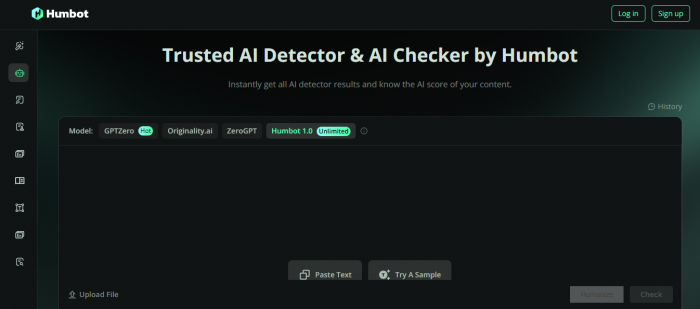

In a professional editorial workflow, the first stage of quality assurance is no longer just a grammar check, but a structural audit. Running a draft through an AI checker has become a common procedure for identifying the "statistical signature" of machine-generated text. Rather than acting as a punitive gatekeeper, these diagnostic systems function as forensic tools for editors.

By measuring the entropy and structural predictability of a manuscript, an audit helps creators locate where the narrative has become too clinical. This data-driven feedback highlights the sections where the author's unique intellectual voice has been obscured, signaling a need for deeper human intervention. This process helps content meet the E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) criteria described in Google's Search Quality Rater Guidelines, which other platforms and repositories have increasingly echoed.
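As a minimal sketch of what measuring entropy could look like, the example below computes the Shannon entropy of each paragraph's word-frequency distribution and flags paragraphs that fall below a cutoff. This is a simplified stand-in, not how any particular checker works: `word_entropy`, `flag_low_entropy`, and the threshold value are assumptions made for illustration, and production tools typically rely on model-based scores instead of raw word counts.

```python
import math
from collections import Counter


def word_entropy(text: str) -> float:
    """Shannon entropy, in bits, of the word-frequency distribution of `text`."""
    words = text.lower().split()
    if not words:
        return 0.0
    total = len(words)
    counts = Counter(words)
    entropy = -sum((c / total) * math.log2(c / total) for c in counts.values())
    return abs(entropy)  # abs() only normalizes -0.0 to 0.0


def flag_low_entropy(paragraphs: list[str], threshold: float) -> list[int]:
    """Return the indices of paragraphs whose entropy is below `threshold`.

    The threshold is an assumed, hand-tuned value, not a standard.
    """
    return [i for i, p in enumerate(paragraphs) if word_entropy(p) < threshold]


# Hypothetical paragraphs: one repetitive, one with no repeated words.
paragraphs = [
    "the plan is the plan and the plan is the plan",
    "quarterly revenue dipped after an unexpectedly wet harvest season",
]
print(flag_low_entropy(paragraphs, threshold=2.5))
```

Word-level entropy grows with passage length, so comparisons are only meaningful between paragraphs of similar size. An editor would use the flagged indices as a list of places to reread, not as proof of machine authorship.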

Restoring the Human Signature: The Art of Linguistic Friction

The true challenge for modern creators lies in moving beyond the raw output of an algorithm to restore narrative vitality. To effectively humanize AI text, one must perform a structural recalibration that goes far beyond basic synonym replacement or manual rephrasing. It requires the re-injection of "stylistic friction": intentional variation in sentence length, idiomatic phrasing used with nuance, and the subjective insight that machines tend to omit.

This is the specialized niche where platforms like Humbot operate. Rather than simply "spinning" content, these technologies focus on deconstructing the rigid, robotic syntax of a draft and rebuilding it with the rhythmic diversity required for deep engagement. This refinement process aims for a final output that doesn't just pass a technical audit, but actually resonates with human readers, who tend to respond to original and unpredictable communication patterns.

Toward a Sustainable Hybrid Editorial Framework

As we look toward the future of digital media, the most resilient strategies will be those that embrace a hybrid model. The goal is not to reject automation, but to master it through a tiered approach:

1. Foundational Drafting: Utilizing LLMs for the structural heavy lifting, such as data aggregation and initial conceptualization.

2. Diagnostic Auditing: Employing forensic tools to identify clusters of low-entropy text that lack original character.

3. Linguistic Optimization: Applying a humanization layer to restore the complexity and authoritative weight essential for high-level publication.
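The three tiers above can be sketched as a small pipeline in which only the sections flagged by the audit are sent for revision. Everything here is hypothetical scaffolding: `hybrid_pipeline` and the stand-in `draft`, `audit`, and `revise` callables are placeholders for whatever drafting model, checker, and human or tool-assisted revision step a team actually uses.

```python
from typing import Callable


def hybrid_pipeline(
    brief: str,
    draft: Callable[[str], list[str]],
    audit: Callable[[str], bool],
    revise: Callable[[str], str],
) -> list[str]:
    """Draft sections, audit each one, and revise only the flagged sections."""
    sections = draft(brief)  # tier 1: foundational drafting
    return [
        revise(section) if audit(section) else section  # tiers 2 and 3
        for section in sections
    ]


# Stand-in callables for illustration only (all assumed, none from a real tool).
def fake_draft(brief: str) -> list[str]:
    return [f"{brief}: overview", f"{brief}: overview overview overview"]


def fake_audit(section: str) -> bool:
    words = section.split()
    return len(set(words)) < len(words)  # flag sections that repeat a word


def fake_revise(section: str) -> str:
    return section + " [needs human rewrite]"


result = hybrid_pipeline("Soil health", fake_draft, fake_audit, fake_revise)
print(result)
```

Passing the stages in as callables keeps the workflow independent of any single vendor, and leaving unflagged sections untouched preserves passages that already read well.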

The Premium of Authenticity

In an increasingly automated digital landscape, the highest form of technical sophistication is the one that remains undeniably human. The future belongs to those who view technology as a collaborator, using it to handle the volume while reserving the "soul" of the story for the creator.

By prioritizing linguistic diversity and employing rigorous humanization processes, we ensure that the democratization of information through AI does not lead to a silent erosion of intellectual individuality. Ultimately, the most valuable currency in 2026 is not the speed at which we speak, but the authentic connection our words build with the world.
