In the evolving landscape of digital communication, we are witnessing a strange cognitive friction. While generative artificial intelligence can produce thousands of words in seconds, readers often report an "uncanny valley" effect when consuming machine-generated text. The problem is not always factual accuracy; often it is a failure of linguistic resonance.

From the perspective of cognitive psychology, the human brain is an advanced pattern-recognition engine. We don't just read words; we subconsciously track the rhythmic variance and "entropy" of a narrator's voice. Research on digital reading patterns by the Nielsen Norman Group found that readers tend to scan content in an F-shaped pattern. The argument here goes a step further: readers also subconsciously disengage when the text lacks the structural friction and "burstiness" that define human thought.

The Mechanics of "Algorithmic Monotony"

Most language models generate text by favoring high-probability next words. In a technological sense, the output is "too perfect." It lacks the sudden shifts in sentence cadence, the intentional use of idiomatic friction, and the rhythmic diversity that characterizes lived experience. For business leaders and digital publishers, this creates a significant risk: if your content is too predictable, it becomes invisible to the human psyche, a state often referred to as Cognitive Glaze.
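One rough way to put a number on the "shifts in sentence cadence" described above is to measure how much sentence length varies across a draft. The sketch below is illustrative only: the function names `sentence_lengths` and `burstiness` are hypothetical, the sentence splitter is deliberately naive, and using the coefficient of variation as a stand-in for "burstiness" is an assumption, not an established standard.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence, using a naive split after ., ! or ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence lengths (assumed proxy).

    0.0 means every sentence has the same length; larger values mean
    the rhythm of the prose varies more.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    mean = statistics.mean(lengths)
    return statistics.pstdev(lengths) / mean if mean else 0.0


uniform = "The cat sat down. The dog ran off. The bird flew by."
varied = (
    "Stop. The committee deliberated for nearly three hours "
    "before reaching any decision at all. Why?"
)

print(burstiness(uniform))  # identical sentence lengths, so no variation
print(burstiness(varied))   # short and long sentences mixed
```

A score like this says nothing about quality on its own; it only flags drafts whose sentences are all roughly the same length.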

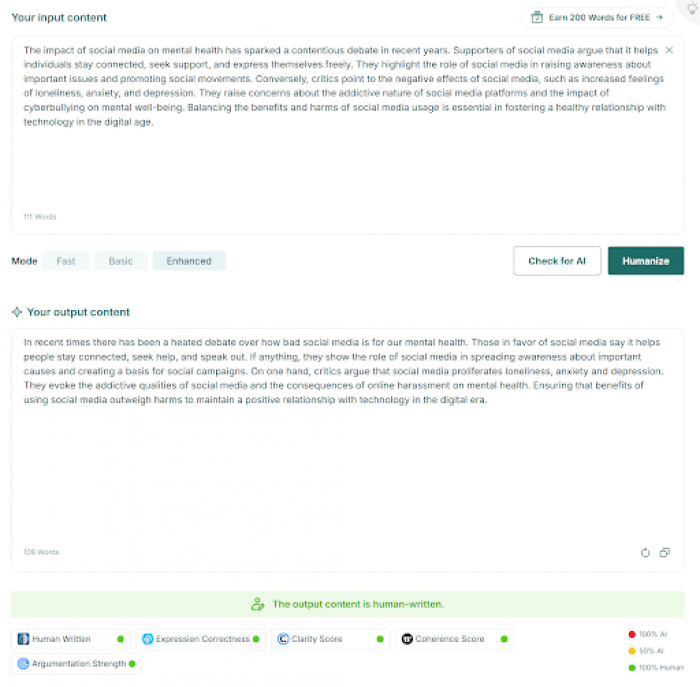

To bridge this gap, many professional creators have begun integrating advanced AI humanizer technology into their publishing pipelines. The objective isn't merely to alter the words, but to re-introduce the cognitive cues that signal "human presence" to a reader’s subconscious. By breaking the mathematical regularity of the machine, creators can ensure their message resonates rather than just being processed as noise.

The Evolution of Search: From Keywords to Semantic Entropy

As search engine algorithms evolve, the focus has shifted from mere keyword density to the evaluation of "information gain" and semantic quality. Modern systems are increasingly adept at identifying the difference between low-effort synthetic filler and high-authority human insights. This technical shift mirrors our biological preference for linguistic diversity; algorithms now reward content that exhibits the complexity and "entropy" associated with expert authorship.
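The "entropy" mentioned above can be made concrete with a toy calculation. This is a minimal sketch of Shannon entropy over word frequencies, assuming a simple lowercase tokenizer; `word_entropy` is a hypothetical name, and nothing here is claimed to reflect how any search engine actually scores content.

```python
import math
import re
from collections import Counter


def word_entropy(text: str) -> float:
    """Shannon entropy, in bits, of the word-frequency distribution."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    counts = Counter(words)
    total = len(words)
    # Sum of p * log2(1 / p) over every distinct word.
    return sum((c / total) * math.log2(total / c) for c in counts.values())


repetitive = "data data data data"
diverse = "a b c d"

print(word_entropy(repetitive))  # one word repeated: nothing is unpredictable
print(word_entropy(diverse))     # four equally likely words
```

Higher values simply mean the vocabulary is spread more evenly; longer texts also tend to score higher, so comparisons only make sense between drafts of similar length.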

In this context, achieving a high rank is no longer just about information volume, but about the quality of the linguistic delivery. This is why the use of an AI humanizer has become a standard practice for maintaining digital visibility. By ensuring that the prose retains its structural integrity and human-like unpredictability, publishers can align their assets with both the technical requirements of the algorithm and the psychological needs of the reader.

Why Heuristics Matter in Digital Content

Search engine algorithms and human readers are increasingly prioritizing "Experience" and "Authoritativeness." When a content draft feels clinical, it fails to trigger the heuristics that humans use to assign trust. This phenomenon is closely linked to how our brains process novelty and information density. As noted in various studies published in Nature, the human neural response is significantly more engaged when presented with varied and unpredictable linguistic structures.

In the search for excellence, many editors now rely on an AI humanizer tool to perform a structural audit of their drafts. This process involves more than just synonym swapping; it is about deconstructing the statistical regularity of a machine draft and rebuilding it with the erratic, high-entropy structures that define human expertise. This ensures that the content provides genuine value and stands out in an increasingly automated world.

Restoration: Beyond the Raw Output

For a digital asset to hold long-term value, it must provide a "human signature." This is why a top-rated AI humanizer has become an essential component of the modern content stack. By restoring the rhythmic diversity that algorithms naturally smooth over, these technologies ensure that a brand's message isn't just "seen," but truly absorbed.

As we move toward a future where AI handles the heavy lifting of data synthesis, the final layer of humanization becomes the most critical step in the creative process. It is the difference between a simple data dump and a resonant narrative that builds lasting connection and authority.

The Premium of Authenticity

In 2026, the ultimate premium in the information market is Attention. As automated text becomes the baseline, the ability to break through the "Cognitive Glaze" with authentic, nuanced, and rhythmic language is a strategic superpower. By utilizing a sophisticated humanization layer, we ensure that technology serves to amplify our voices, rather than drowning them out in a sea of predictable syntax. In an era of perfection, it is our uniquely human textures that truly command the room.
